Counterfactual Fairness by Combining Factual and Counterfactual Predictions

Oct 14, 2024

Zeyu Zhou

Tianci Liu

Ruqi Bai

Jing Gao

Murat Kocaoglu

David I. Inouye

Abstract

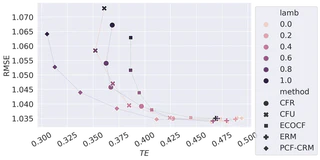

In high-stakes domains such as healthcare and hiring, the role of machine learning (ML) in decision-making raises significant fairness concerns. This work focuses on Counterfactual Fairness (CF), which posits that an ML model's outcome for any individual should remain unchanged had they belonged to a different demographic group. Previous works have proposed methods that guarantee CF. However, their effects on the model's predictive performance remain largely unclear. To fill this gap, we provide a theoretical study of the inherent trade-off between CF and predictive performance in a model-agnostic manner. We first propose a simple but effective method to cast an optimal but potentially unfair predictor into a fair one with minimal loss of performance. By analyzing the excess risk incurred by perfect CF, we quantify this inherent trade-off. We further analyze our method's performance when only incomplete causal knowledge is available.
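The abstract's central idea, turning a possibly unfair predictor into a counterfactually fair one by combining its factual and counterfactual predictions, can be illustrated with a small sketch. Everything below is an assumption made for illustration: a hypothetical linear structural causal model with a binary sensitive attribute A (X = ALPHA·A + U), a stand-in base predictor, and a combination rule that averages the base predictor's outputs over the worlds A ← a′ weighted by P(A = a′). The names `counterfactual_x`, `base_predictor`, and `combined_predictor` and the constants `ALPHA`, `BETA`, and `P_A` are hypothetical. This is not the authors' code, and the paper's exact construction may differ.

```python
import numpy as np

# Hypothetical toy SCM (an assumption for this sketch, not taken from the paper):
#   A ~ Bernoulli(P_A), U ~ N(0, 1), X = ALPHA * A + U, Y = X + BETA * A + noise
ALPHA, BETA, P_A = 2.0, 1.0, 0.3


def counterfactual_x(x, a, a_prime):
    """Abduct U = x - ALPHA * a, then recompute X under the intervention A <- a_prime."""
    u = x - ALPHA * a
    return ALPHA * a_prime + u


def base_predictor(x, a):
    """Stand-in for the optimal but potentially unfair predictor E[Y | X, A] in this toy SCM."""
    return x + BETA * a


def combined_predictor(x, a):
    """Combine factual and counterfactual predictions: average the base predictor over
    every world A <- a', weighted by P(A = a') (the weighting is an assumption here)."""
    weights = {0: 1.0 - P_A, 1: P_A}
    return sum(
        w * base_predictor(counterfactual_x(x, a, a_p), a_p)
        for a_p, w in weights.items()
    )


# Sample a few individuals from the toy SCM.
rng = np.random.default_rng(0)
n = 5
a = rng.integers(0, 2, size=n)
u = rng.normal(size=n)
x = ALPHA * a + u

# Compare each individual's factual prediction with the prediction for
# their counterfactual twin (same U, sensitive attribute flipped).
x_cf = counterfactual_x(x, a, 1 - a)
base_gap = np.abs(base_predictor(x, a) - base_predictor(x_cf, 1 - a))
fair_gap = np.abs(combined_predictor(x, a) - combined_predictor(x_cf, 1 - a))
print("max gap, base predictor:    ", base_gap.max())
print("max gap, combined predictor:", fair_gap.max())
```

In this toy model the base predictor's output shifts by ALPHA + BETA whenever A is flipped, so it violates CF. The combined predictor reduces to U + (ALPHA + BETA)·P_A, which depends only on the abducted noise U, so each individual and their counterfactual twin receive the same prediction up to floating-point error.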

Publication: NeurIPS 2024

Authors

Machine Learning Engineer

I am a Machine Learning Engineer on the AKI team at Apple, currently working on the LLM Summarizer for Siri. I earned my Ph.D. in Machine Learning at Purdue University under the supervision of Dr. David I. Inouye. My current work revolves around post-training techniques such as supervised fine-tuning (SFT), reinforcement learning (RL), and prompt optimization.